What Is HappyHorse-1.0? The Unknown AI Video Model That Just Topped Every Major Benchmark

Last updated: Apr 2026

A model nobody saw coming just took the #1 spot on all four video generation leaderboards tracked by Artificial Analysis — the most widely cited independent evaluation platform for AI video.

Its name: HappyHorse-1.0.

Not from Google. Not from OpenAI. Not from ByteDance. From a lab that spun out of an e-commerce company.

Here's what this article will give you in ~8 minutes: a clear picture of what HappyHorse-1.0 actually is, who built it, exactly how it performs against every major competitor (with full benchmark tables), what we still don't know, and what this means if you work with AI video in any capacity.

Let's get into it.

HappyHorse-1.0 at a Glance

HappyHorse-1.0 is an AI video generation model that supports:

Text-to-video synthesis

Image-to-video synthesis

Integrated audio generation

It was released in April 2026 and immediately claimed the top rank across every Artificial Analysis video leaderboard — a first for any single model.

What makes it stand out isn't one flashy feature. It's the consistency: HappyHorse-1.0 outperforms models from ByteDance (Seedance 2.0), KlingAI (Kling 3.0), Google (Veo 3.1), xAI (Grok), and others — across multiple task types simultaneously.

Quick clarification: Topping benchmarks doesn't automatically make a model the best tool for every workflow. Benchmarks measure human preference in controlled head-to-head comparisons. They don't measure API uptime, pricing, or edge-case reliability. But when a model leads all four leaderboards across 31,000+ evaluation samples, it's no longer noise. It's signal worth understanding.

As of now, the API is listed as "Coming soon" — no public access yet.

Who Built HappyHorse-1.0?

Here's the part that caught the AI community off guard.

You'd expect the world's top-ranked video model to come from a well-funded, pure-play AI research lab. Instead, HappyHorse-1.0 was built by a team led by Zhang Di, originally operating inside Taotian Group's Future Life Lab.

What's Taotian Group? It's the entity behind Taobao and Tmall — Alibaba's core e-commerce platforms. The Future Life Lab was built under the ATH-AI Innovation Division, an internal R&D unit. It has since spun off as an independent entity.

So is this just "Alibaba's video model" with a different name?

Not exactly. The team has separated from the parent organization. But their heritage matters: years of access to Alibaba-scale infrastructure, data pipelines, and engineering talent clearly provided the foundation to build something at this level.

It's a pattern worth watching. In AI, the most disruptive entrants often aren't the ones with the biggest research headcount. They're the ones with the best applied engineering muscle — teams that have been shipping products at scale and bring that discipline to model development.

Think of it this way: Google DeepMind has 1,000+ researchers. But a focused team of ~50 engineers who've been building real-time recommendation systems for a billion users? They understand optimization, infrastructure, and reliability at a level that pure research labs sometimes don't.

The Benchmarks: Full Performance Breakdown

Now, let's look at what actually happened on the leaderboards. This is the data that made HappyHorse-1.0 impossible to ignore.

The rankings below are sourced from Artificial Analysis (April 2026). Scores are ELO-based, meaning they're derived from head-to-head human preference comparisons — real people watching video outputs side by side and picking the better one.
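To make "ELO-based" concrete, here is a minimal sketch of how a stream of head-to-head votes can be turned into ratings. It uses the textbook Elo update rule; the K-factor of 32, the 400-point scale, the starting rating of 1,000, and the model names are all assumptions for illustration. The article's source does not describe the exact method Artificial Analysis uses, so treat this as a sketch of the general technique, not their pipeline.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(ratings: dict, winner: str, loser: str, k: float = 32.0) -> None:
    """Apply one head-to-head vote in place (k=32 is an assumed K-factor)."""
    expected_win = expected_score(ratings[winner], ratings[loser])
    delta = k * (1.0 - expected_win)  # a surprising win moves ratings more
    ratings[winner] += delta
    ratings[loser] -= delta


# Hypothetical models and votes: model_a wins three comparisons, model_b wins one.
ratings = {"model_a": 1000.0, "model_b": 1000.0}
votes = [("model_a", "model_b")] * 3 + [("model_b", "model_a")]
for winner, loser in votes:
    update(ratings, winner, loser)

print(ratings)
```

Each vote shifts points from the loser to the winner, so the total stays constant and a model's rating only has meaning relative to the others in the same pool.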

1. Text-to-Video (No Audio)

| Rank | Model | Creator | ELO Score | Samples |
|---|---|---|---|---|
| 🥇 1 | HappyHorse-1.0 | HappyHorse | 1,379 | 8,819 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,273 | 8,426 |
| 3 | SkyReels V4 | Skywork AI | 1,245 | 5,956 |
| 4 | Kling 3.0 1080p (Pro) | KlingAI | 1,242 | 5,390 |
| 5 | Kling 3.0 Omni 1080p (Pro) | KlingAI | 1,230 | 4,879 |

Gap over #2: +106 ELO points.

How big is that? Under the standard Elo formula (assuming the 400-point scale used in chess), a 106-point gap means the higher-rated model is expected to win roughly 65% of head-to-head comparisons. It's not a coin flip. It's a clear, consistent edge.
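For readers who want to translate a rating gap into an expected preference rate, the conversion is a one-line formula. This is a minimal sketch that assumes the standard Elo logistic curve with a 400-point scale; the function name is illustrative, and Artificial Analysis may use a different scale or model.

```python
def preference_probability(elo_gap: float, scale: float = 400.0) -> float:
    """Expected share of head-to-head wins for the higher-rated model.

    Assumes the standard Elo logistic curve; `scale` is an assumption.
    """
    return 1.0 / (1.0 + 10 ** (-elo_gap / scale))


# Gap between #1 and #2 on the text-to-video (no audio) leaderboard.
gap = 1379 - 1273
probability = preference_probability(gap)
print(f"{gap}-point gap -> preferred in about {probability:.0%} of comparisons")
```

A gap of zero gives exactly 50%, so the same function makes it easy to see how quickly small gaps collapse toward a coin flip.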

2. Image-to-Video (No Audio)

| Rank | Model | Creator | ELO Score | Samples |
|---|---|---|---|---|
| 🥇 1 | HappyHorse-1.0 | HappyHorse | 1,413 | 9,056 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,356 | 4,656 |
| 3 | grok-imagine-video | xAI | 1,332 | 6,299 |
| 4 | PixVerse V6 | PixVerse | 1,318 | 9,441 |
| 5 | Kling 3.0 Omni 1080p (Pro) | KlingAI | 1,298 | 4,741 |

Gap over #2: +57 ELO points.

This is HappyHorse-1.0's highest absolute score (1,413) across all categories. Image-to-video is often where models reveal weaknesses, because maintaining motion coherence while respecting the source image is technically demanding. A strong image-to-video score suggests robust spatial understanding.

3. Text-to-Video (With Audio)

| Rank | Model | Creator | ELO Score | Samples |
|---|---|---|---|---|
| 🥇 1 | HappyHorse-1.0 | HappyHorse | 1,225 | 6,684 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,222 | 7,873 |
| 3 | SkyReels V4 | Skywork AI | 1,142 | 5,226 |
| 4 | Kling 3.0 Omni 1080p (Pro) | KlingAI | 1,108 | 5,346 |

Gap over #2: +3 ELO points.

Razor-thin. This tells us something important: native audio generation is the new competitive frontier, and no model has pulled away yet. The top two are effectively tied, while third place trails by about 80 points.

4. Image-to-Video (With Audio)

| Rank | Model | Creator | ELO Score | Samples |
|---|---|---|---|---|
| 🥇 1 | HappyHorse-1.0 | HappyHorse | 1,162 | 6,896 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,160 | 4,831 |
| 3 | SkyReels V4 | Skywork AI | 1,084 | 5,524 |
| 4 | Veo 3.1 Fast | Google | 1,075 | 4,680 |

Gap over #2: +2 ELO points.

Another near-tie. But the pattern holds: HappyHorse-1.0 is #1 in every category.

Reading the Full Picture

So what does the complete data tell us?

In pure video quality (no audio): HappyHorse-1.0 has a commanding lead — 57 to 106 ELO points ahead. This is its strongest zone.

In audio-integrated video: The lead narrows to 2–3 points. This is a dead heat where the entire field is still maturing.

Across all four leaderboards combined: Over 31,000 evaluation samples. This isn't a fluke on a small test set.

The core takeaway from the benchmarks: on these leaderboards, HappyHorse-1.0 is the clear leader for pure video generation, and effectively tied for first in the newer audio-video combined task.
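The summary figures above can be reproduced directly from the four tables. The sketch below hard-codes HappyHorse-1.0's score and sample count plus the runner-up's score for each leaderboard, then derives the gaps and the combined sample total. The dictionary layout and key names are illustrative choices, not anything published by Artificial Analysis.

```python
# Scores and sample counts copied from the four leaderboard tables.
leaderboards = {
    "Text-to-Video (No Audio)": {"first": 1379, "second": 1273, "samples": 8819},
    "Image-to-Video (No Audio)": {"first": 1413, "second": 1356, "samples": 9056},
    "Text-to-Video (With Audio)": {"first": 1225, "second": 1222, "samples": 6684},
    "Image-to-Video (With Audio)": {"first": 1162, "second": 1160, "samples": 6896},
}

# Lead of HappyHorse-1.0 over the #2 model in each category.
gaps = {name: row["first"] - row["second"] for name, row in leaderboards.items()}

# HappyHorse-1.0's evaluation samples summed across all four leaderboards.
total_samples = sum(row["samples"] for row in leaderboards.values())

for name, gap in gaps.items():
    print(f"{name}: +{gap} ELO")
print(f"Total HappyHorse-1.0 samples: {total_samples:,}")
```

The gaps come out to 106, 57, 3, and 2 points, and the sample counts sum to 31,455, which is where the "31,000+" figure comes from.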

HappyHorse-1.0 in Action: Sample Gallery

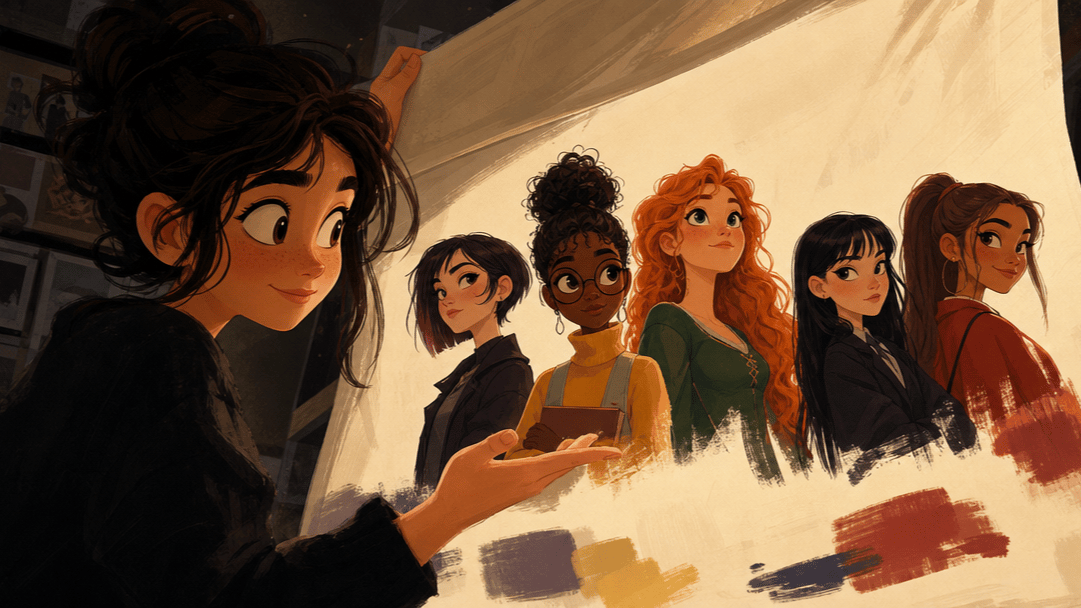

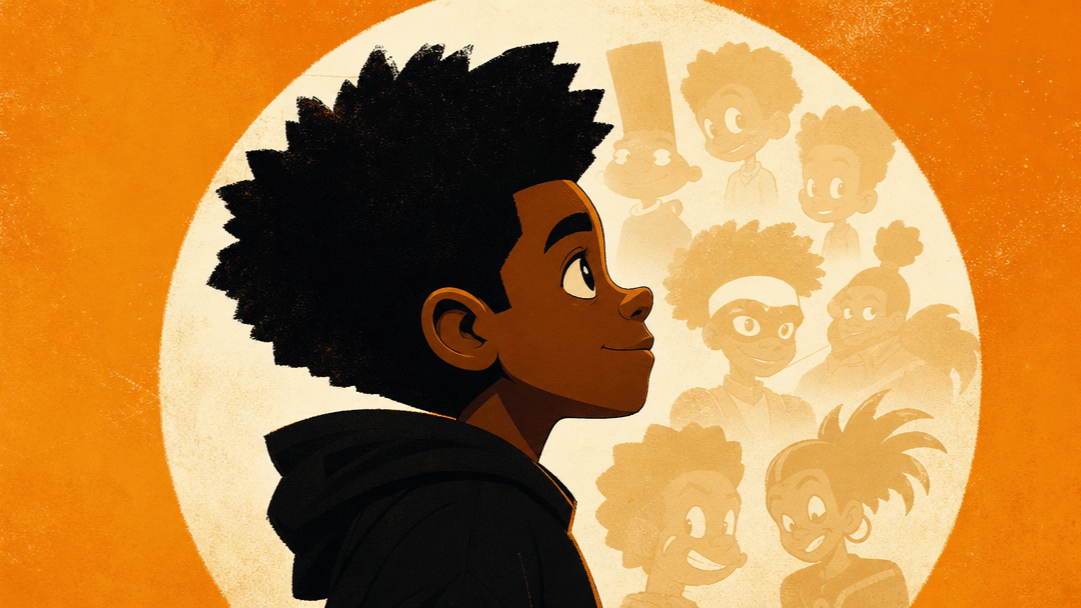

3D Character Animation

Photorealistic Cinematics

Nature & Macro Detail

Human Emotion & Expression

Anime & Cartoon Styles

Lifestyle & Food

What We Still Don't Know

Benchmarks are one lens. Here's what's missing before anyone can call HappyHorse-1.0 the definitive best tool:

No public API yet. "Coming soon" is all we have. No launch date confirmed.

No technical paper. No architecture details, training data disclosure, or methodology breakdown has been published.

Resolution and duration specs: unconfirmed. Max resolution, frame rate, and video length are not listed.

No pricing. Without a live API, cost comparisons against competitors (e.g., SkyReels V4 at ~$7.20/min, Grok video at ~$4.20/min) are impossible.

Open-source status: unknown. Not listed under Artificial Analysis's "Open weights" filter.

Why this matters: A model that scores highest but costs 10x more or has 50% API uptime won't dominate real-world usage. Performance is necessary but not sufficient. We'll update this article as these details emerge.

What HappyHorse-1.0 Signals About the AI Video Market

Beyond the model itself, HappyHorse-1.0's emergence tells us three things about where the industry is heading.

The frontier isn't locked up by the usual suspects

Google, OpenAI, and ByteDance have the biggest budgets. But the #1 video model just came from a team that spun out of a shopping platform's R&D lab. If you're building in this space — as a startup, an open-source contributor, or a corporate innovation team — this should be encouraging. The door is open.

China's AI video ecosystem is pulling ahead

Look at the text-to-video leaderboard again. All five of the top entries come from Chinese teams: HappyHorse, ByteDance Seed, Skywork AI, and KlingAI (which holds two spots). This isn't one outlier; it's a pattern. China's AI video generation capability is not just competitive; on current benchmarks, it's leading.

Audio + video integration is the next battleground

Artificial Analysis now tracks separate leaderboards for "with audio" and "without audio." That split exists because the market is moving toward fully integrated audiovisual generation. HappyHorse-1.0's slim leads in the audio categories suggest it was designed with this multimodal future in mind — but so was everyone else. The gaps will shift. This race is just starting.

Key Takeaways

HappyHorse-1.0 is the current #1 AI video model across all four Artificial Analysis leaderboards — text-to-video, image-to-video, with and without audio — evaluated over 31,000+ samples.

Its dominance is clearest in pure video generation (no audio), where it leads by 57–106 ELO points. In audio-integrated categories, the field is converging — margins are just 2–3 points.

The team's origin is unexpected. Built by a group that spun out of Alibaba's e-commerce R&D infrastructure, not a traditional AI research lab. This signals that applied engineering teams can compete at the absolute frontier.

Major unknowns remain. No public API, no pricing, no technical paper, no confirmed specs. Benchmark leadership does not yet equal real-world accessibility.

Watch this space. When the API launches, cost and reliability data will determine whether HappyHorse-1.0 becomes a standard tool or stays a benchmark curiosity. We'll update this article as details emerge.

Benchmark data sourced from Artificial Analysis. Rankings are dynamic and may shift as new evaluations are added.